A team of U.S. researchers has demonstrated, for the first time in human trials, a device that reads brain signals to automatically amplify the voice a listener wants to hear — a potential lifeline for the 430 million people worldwide with disabling hearing loss.

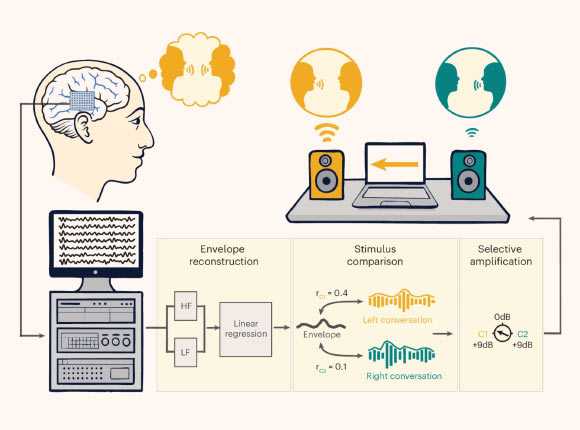

Participants with intracranial electrodes listened to two competing, spatially separated conversations. Their neural signals were recorded and fed into a real-time processing system. The system uses a linear regression model to reconstruct the temporal envelope of the attended speech from low-frequency (LF) and high-gamma (HF) neural features. The reconstructed envelope is then compared to the envelopes of the two conversations to determine the listener’s focus, which in turn drives the selective amplification of the attended speaker. Image credit: Choudhari et al., doi: 10.1038/s41593-026-02281-5.
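The decoding step described in the caption can be sketched in a few lines of Python. This is a toy illustration on simulated data, not the authors' implementation: the function names, the number of time lags, the small ridge term added to the linear regression, and the synthetic "neural" features are all assumptions made for the example.

```python
import numpy as np

def lagged(features, n_lags):
    """Stack time-shifted copies of the features (samples x channels).

    Row t holds the features at t, t+1, ..., t+n_lags-1, because the
    neural response follows the sound it encodes.
    """
    n, c = features.shape
    out = np.zeros((n, c * n_lags))
    for k in range(n_lags):
        out[: n - k, k * c:(k + 1) * c] = features[k:]
    return out

def fit_decoder(features, attended_env, n_lags=8, ridge=1e-2):
    """Linear regression from lagged neural features to the attended envelope.

    The ridge term is an assumption, added only for numerical stability.
    """
    X = lagged(features, n_lags)
    A = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ attended_env)

def decode_attention(features, env_a, env_b, weights, n_lags=8):
    """Reconstruct an envelope and compare it with both conversations."""
    recon = lagged(features, n_lags) @ weights
    r_a = np.corrcoef(recon, env_a)[0, 1]
    r_b = np.corrcoef(recon, env_b)[0, 1]
    return ("A" if r_a > r_b else "B"), r_a, r_b

def simulate_trial(rng, mix, attended, n=2000, delay=3, noise=1.0):
    """Hypothetical data: each channel tracks the attended envelope, delayed."""
    env_a = np.abs(rng.standard_normal(n))
    env_b = np.abs(rng.standard_normal(n))
    target = env_a if attended == "A" else env_b
    delayed = np.concatenate([np.zeros(delay), target[:-delay]])
    features = np.outer(delayed, mix) + noise * rng.standard_normal((n, mix.size))
    return features, env_a, env_b

rng = np.random.default_rng(0)
mix = rng.standard_normal(16)  # assumed per-channel sensitivity

# Train while the simulated listener attends to conversation A.
train_feats, train_a, _ = simulate_trial(rng, mix, attended="A")
weights = fit_decoder(train_feats, train_a)

# Test on a new trial in which the listener attends to conversation B.
test_feats, test_a, test_b = simulate_trial(rng, mix, attended="B")
label, r_a, r_b = decode_attention(test_feats, test_a, test_b, weights)
print(label, round(r_a, 3), round(r_b, 3))
```

The decoder is trained only to reconstruct whichever envelope the listener attends to, so the same weights apply when attention moves to the other conversation. A real-time system would run the comparison over short sliding windows rather than a whole trial.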

Understanding speech in crowded environments remains one of the most difficult challenges in auditory neuroscience and hearing technology.

In these situations, listeners rely on selective attention to focus on a target talker while suppressing competing voices and background noise.

Current hearing aids often fail because they cannot infer the listener’s intent. As a result, they amplify all sounds indiscriminately, leading to degraded performance in real-world settings and contributing to low user adoption and social isolation.

“We have developed a system that acts as a neural extension of the user, leveraging the brain’s natural ability to filter through all the sounds in a complex environment to dynamically isolate the specific conversation they wish to hear,” said Dr. Nima Mesgarani, a researcher at Columbia University.

“This science empowers us to think beyond traditional hearing aids, which simply amplify sound, toward a future where technology can restore the sophisticated, selective hearing of the human brain.”

In their study, Dr. Mesgarani and colleagues teamed up with surgeons and their epilepsy patients who were undergoing brain surgery to better pinpoint the sources of their seizures.

The hospital patients, who volunteered to be part of this study, already had electrodes implanted in their brains.

The team’s system used the electrodes to measure the brain activity of the patients as they focused on one of two overlapping conversations played simultaneously.

The system then automatically detected which conversation a patient was paying attention to and adjusted the volume in real time, turning up that conversation while quieting the other.
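One way to picture the volume adjustment is as a gain controller that nudges each conversation toward a target level every time the decoder reports a decision. The sketch below is illustrative only: the boost and cut levels, the step size, and the function names are assumptions, not values reported by the researchers.

```python
import numpy as np

def db_to_linear(db):
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)

def update_gains(gains_db, attended, boost_db=6.0, cut_db=-6.0, step_db=1.5):
    """Move each talker's gain one step toward its target.

    Limiting the change per update avoids abrupt jumps in loudness when
    the decoded focus of attention switches.
    """
    new_gains = {}
    for talker, gain in gains_db.items():
        target = boost_db if talker == attended else cut_db
        change = float(np.clip(target - gain, -step_db, step_db))
        new_gains[talker] = gain + change
    return new_gains

def mix_audio(audio, gains_db):
    """Sum the talkers' audio frames after applying their current gains."""
    return sum(db_to_linear(gains_db[t]) * audio[t] for t in audio)

# Hypothetical stream of decoder decisions: five updates on A, then three on B.
decisions = ["A"] * 5 + ["B"] * 3
gains = {"A": 0.0, "B": 0.0}
for attended in decisions:
    gains = update_gains(gains, attended)

# Apply the final gains to one frame of placeholder audio.
frame = {"A": np.ones(4), "B": np.ones(4)}
output = mix_audio(frame, gains)
print(gains, output)
```

In this sketch the gains ramp gradually, so a brief decoding error shifts the balance only slightly before the next decision corrects it.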

For one volunteer, the experience of controlling the system with her brain was literally unbelievable. She accused the researchers of secretly adjusting the volumes.

Others told stories about friends and family with hearing impairments who could benefit from such a technology. One person said: “It seems like science fiction.”

“The central unanswered question was whether brain-controlled hearing technology could move beyond incremental advances, towards a prototype that could help someone hear better in real time,” said Dr. Vishal Choudhari, also of Columbia University.

“For the first time, we have shown that such a system that reads brain signals to selectively enhance conversations can provide a clear real-time benefit.”

“This moves brain-controlled hearing from theory toward practical application.”

The researchers developed real-time machine-learning algorithms that could examine the brainwaves and identify which conversation the patients were paying attention to.

Once deployed, their system could rapidly deduce which conversation each listener was paying attention to and make it easier for them to hear it.

This happened both when the scientists guided the subjects toward a particular conversation, and when the subjects chose freely, as would be necessary in a real-world conversation.

“For this to work in real time, the system has to be very fast, accurate and stable for the experience to feel pleasant for the listener,” Dr. Mesgarani said.

The team’s work was published today in the journal Nature Neuroscience.

_____

V. Choudhari et al. Real-time brain-controlled selective hearing enhances speech perception in multi-talker environments. Nat Neurosci, published online May 11, 2026; doi: 10.1038/s41593-026-02281-5