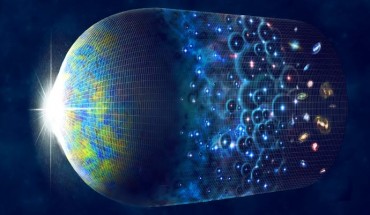

Mathematicians from University College London and the University of California, Davis, have published a proof that the Universe’s accelerating expansion can be explained without dark energy, dealing a serious blow to the Lambda-cold dark matter model, the standard cosmological model that has stood for nearly 30 years. Alexander et al. provide a mathematical proof that instabilities inherent in the Einstein-Euler equations imply...